If you have ever tried to import a 100MB CSV file into Google Sheets using the built-in File > Import menu, you already know what happens next: your browser freezes, the tab crashes, and you lose five minutes staring at a spinning wheel. The problem is not Google Sheets itself. It is where the CSV processing happens: inside your browser tab rather than on a server.

Most people assume the import process is straightforward. You pick a file, Sheets loads it, done. But behind the scenes, your browser is doing all the heavy lifting: reading the file into RAM, parsing every row and column, rendering a preview, and then pushing the data to Google's servers. For small files, this works fine. For anything over 50MB, it is a recipe for tab crashes and lost work.

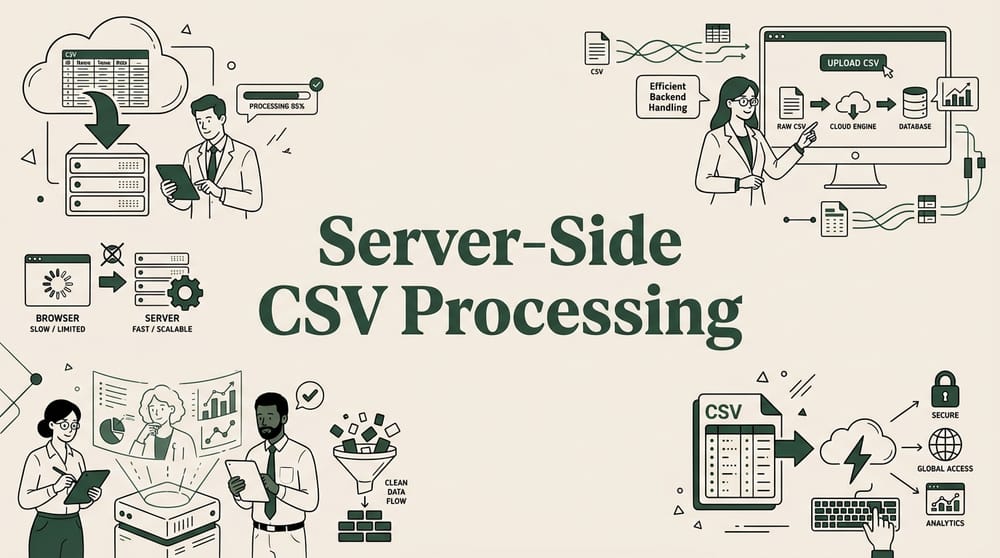

This article breaks down exactly what happens during browser-side and server-side CSV processing, why the difference matters, and how tools like SmoothSheet use server-side processing to handle large imports without crashing your browser.

Key Takeaways:

- Browser-side CSV processing loads entire files into RAM, causing crashes above 50MB

- Server-side processing handles files up to 500MB+ without touching browser memory

- Chrome tabs typically crash at 1-2GB RAM usage -- a 200MB CSV easily hits that

- SmoothSheet uses server-side processing to import large CSVs directly into Google Sheets

- Small files under 20MB work fine with browser-based imports

How Browser-Based CSV Processing Works

When you use Google Sheets' native File > Import feature, every step of the import happens inside your browser tab. Here is the actual sequence:

- File selection: You pick a CSV from your computer or Google Drive.

- File reading: The browser reads the entire file into memory using the FileReader API. A 100MB file means 100MB+ of browser RAM consumed immediately.

- Parsing: JavaScript in the browser tab parses the raw text -- splitting rows by line breaks, splitting columns by delimiters, handling quoted fields. This creates additional objects in memory, often 2-3x the original file size.

- Validation and preview: The browser renders a preview of your data, detects column types, and checks for obvious errors.

- Upload to Google: Finally, the parsed data is sent to Google's servers and written into your spreadsheet.

The critical problems are steps 2 and 3, file reading and parsing. Your browser tab has a finite amount of memory -- Chrome allocates roughly 1-2GB per tab on most machines. When the CSV file plus its parsed representation exceeds that limit, the tab crashes. You get the infamous "Aw, Snap!" error, or the file simply fails to load without explanation.

This is not a Google Sheets limitation. It is a browser memory limitation. Google Sheets itself can hold up to 10 million cells per spreadsheet. The bottleneck is getting the data through the browser and into Sheets.

Why Browser Processing Fails with Large Files

Browser-based CSV processing creates a memory multiplier effect. Here is why a 100MB file does not just use 100MB of RAM:

- Raw file buffer: The original file content sits in memory (~100MB)

- String conversion: Converting bytes to JavaScript strings can roughly double the memory footprint for mostly-ASCII data, because JavaScript strings are defined in terms of 16-bit UTF-16 code units (~200MB)

- Parsed objects: Each cell becomes a separate JavaScript object with metadata. For a file with 500,000 rows and 20 columns, that is 10 million objects (~150-300MB of overhead)

- DOM rendering: If the browser tries to preview even a subset of rows, it creates DOM nodes that consume additional memory

Add it up: a 100MB CSV file can easily consume 400-600MB of browser memory. A 200MB file pushes past the 1GB mark, and a 300MB file is almost guaranteed to crash the tab.
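
The arithmetic above can be sketched in a few lines. This is a rough back-of-the-envelope model, not a measurement: the function name `estimate_browser_memory_mb` and the 15-30 bytes-per-cell range are assumptions derived from the article's own figures (150-300MB of overhead for 10 million cells), and DOM rendering is left out because the article gives no number for it.

```python
from typing import Tuple


def estimate_browser_memory_mb(
    file_mb: float,
    rows: int,
    cols: int,
    bytes_per_cell_low: int = 15,   # assumption: 150MB / 10M cells
    bytes_per_cell_high: int = 30,  # assumption: 300MB / 10M cells
) -> Tuple[float, float]:
    """Return a (low, high) estimate of tab memory in MB, excluding DOM nodes."""
    raw_buffer = file_mb              # original file bytes held in memory
    string_copy = file_mb * 2         # text copy at roughly 2 bytes per character
    cells = rows * cols               # one parsed object per cell
    objects_low = cells * bytes_per_cell_low / 1_000_000
    objects_high = cells * bytes_per_cell_high / 1_000_000
    return (raw_buffer + string_copy + objects_low,
            raw_buffer + string_copy + objects_high)


if __name__ == "__main__":
    # The article's example: 100MB file, 500,000 rows, 20 columns.
    print(estimate_browser_memory_mb(100, 500_000, 20))
    # A 200MB file with twice as many rows.
    print(estimate_browser_memory_mb(200, 1_000_000, 20))
```

Under these assumptions the 100MB example lands at roughly 450-600MB before any preview rendering, and the 200MB example at roughly 900-1200MB, which is where the 1GB mark comes from.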

Mobile browsers are even more constrained. Safari on iOS typically limits tabs to 500MB-1GB of memory. If you are trying to import a large CSV on a tablet or phone, the threshold for crashes drops to around 20-30MB.

There are also CPU bottlenecks. Parsing a million-row CSV in a single browser thread can take 30-60 seconds, during which the tab appears frozen. Users often assume the import has failed and close the tab -- losing all progress. If you have ever hit this wall, our guide on uploading large CSVs without browser crashes covers several workarounds.

How Server-Side Processing Works

Server-side CSV processing flips the entire model. Instead of your browser doing the work, a dedicated server handles every step of the import. Here is what happens when you use a server-side tool like SmoothSheet:

- File upload: Your CSV file is uploaded directly to a processing server. The browser only handles the upload stream -- it does not need to read the entire file into memory at once.

- Server-side parsing: The server parses the CSV using optimized, compiled code (not browser JavaScript). Servers have 8-64GB+ of RAM and multi-core CPUs, so even a 500MB file is trivial to process.

- Streaming to Google Sheets: The server writes data directly to your Google Sheets spreadsheet via the Google Sheets API. Data flows server-to-server, bypassing the browser entirely.

- Progress updates: Your browser just displays a progress bar. The actual data never passes through the browser tab.
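
The general idea behind the steps above can be sketched as a streaming parser that never holds the whole file at once. This is a minimal illustration under stated assumptions, not SmoothSheet's actual implementation: `iter_batches`, `import_csv`, and the `write_batch` callback are hypothetical names, and `write_batch` stands in for whatever call would write rows to a spreadsheet.

```python
import csv
import io
from typing import Callable, Iterator, List, TextIO

Row = List[str]


def iter_batches(stream: TextIO, batch_size: int = 1000) -> Iterator[List[Row]]:
    """Parse CSV rows lazily and yield them in fixed-size batches."""
    batch: List[Row] = []
    for row in csv.reader(stream):   # csv.reader handles quoted fields
        batch.append(row)
        if len(batch) >= batch_size:
            yield batch
            batch = []
    if batch:                        # flush the final partial batch
        yield batch


def import_csv(stream: TextIO,
               write_batch: Callable[[List[Row]], None],
               batch_size: int = 1000) -> int:
    """Send each batch to write_batch (hypothetical sink); return rows written."""
    total = 0
    for batch in iter_batches(stream, batch_size):
        write_batch(batch)
        total += len(batch)          # a progress bar could be driven from this
    return total


if __name__ == "__main__":
    sample = io.StringIO('a,b\n1,2\n3,4\n5,"x,y"\n')
    received: List[List[Row]] = []
    print(import_csv(sample, received.append, batch_size=2), "rows written")
```

Memory use here is bounded by the batch size rather than the file size. When reading from disk, opening the file with `open(path, newline="")` lets the `csv` module handle line endings inside quoted fields correctly.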

The result: your browser tab stays responsive, uses minimal RAM (just the add-on UI), and can handle files that would instantly crash a browser-based import. SmoothSheet processes files up to 500MB at a flat rate of $9/month, with no per-file charges or row limits.

The key difference is where the bottleneck lives. In browser processing, the bottleneck is your browser's memory and single-threaded JavaScript engine. In server-side processing, the bottleneck is the server's resources -- which are orders of magnitude larger. Before importing, you can use the Google Sheets Limits Calculator to verify your data fits within Sheets' 10-million-cell cap.
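
For a quick local check against the 10-million-cell cap, a short script can count rows and columns without loading the file into memory. This is a sketch only and makes no claim about how the Limits Calculator works; `count_cells` and `fits_in_sheets` are hypothetical helper names, and using the widest row as the column count is an assumption about how ragged rows would be laid out.

```python
import csv
import io
from typing import TextIO, Tuple

SHEETS_CELL_LIMIT = 10_000_000  # the cap cited in this article


def count_cells(stream: TextIO) -> Tuple[int, int, int]:
    """Return (rows, widest_row, rows * widest_row) for a CSV stream."""
    rows = 0
    widest = 0
    for row in csv.reader(stream):   # one row in memory at a time
        rows += 1
        widest = max(widest, len(row))
    return rows, widest, rows * widest


def fits_in_sheets(stream: TextIO, limit: int = SHEETS_CELL_LIMIT) -> bool:
    """True if the CSV's cell count is within the given limit."""
    _, _, cells = count_cells(stream)
    return cells <= limit


if __name__ == "__main__":
    sample = io.StringIO("id,name,city\n1,Ada,London\n")
    print(count_cells(sample))
```

By this count, the article's 500,000-row, 20-column example is exactly 10 million cells, so it sits right at the cap.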

Real-World Performance Comparison

To make this concrete, here is how browser-based and server-side processing compare across different file sizes. These numbers are based on typical performance with a standard laptop (8GB RAM, Chrome browser) versus server-side processing through SmoothSheet:

| File Size | Rows (est.) | Browser Import Time | Browser Crash Rate | Server-Side Import Time | Server Crash Rate |
|---|---|---|---|---|---|
| 10MB | ~50,000 | 15-30 seconds | <5% | 10-15 seconds | 0% |
| 50MB | ~250,000 | 1-3 minutes | ~25% | 30-60 seconds | 0% |
| 100MB | ~500,000 | 3-8 minutes* | ~60% | 1-2 minutes | 0% |
| 200MB | ~1,000,000 | Fails almost always | ~90% | 2-4 minutes | 0% |

*When it does not crash. Many users will experience tab crashes before the import completes at this size.

The pattern is clear. Browser processing works reasonably well up to about 30-40MB. Between 40 and 100MB, it becomes unreliable -- sometimes it works, sometimes it crashes, depending on how many other tabs you have open, what extensions are running, and how much RAM your machine has. Above 100MB, browser imports are effectively broken for most users.

Server-side processing stays consistent regardless of file size because the processing happens on dedicated infrastructure. The only variable is upload speed -- on a decent internet connection, even a 200MB file uploads in under a minute.
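
To put a number on "a decent internet connection", the upload time can be worked out directly. The helpers below are an illustrative sketch with hypothetical names; they assume 1MB = 1,000,000 bytes and ignore protocol overhead and congestion, so real uploads will be somewhat slower.

```python
def upload_seconds(file_mb: float, upload_mbps: float) -> float:
    """Ideal upload time: megabytes * 8 bits per byte / megabits per second."""
    return file_mb * 8 / upload_mbps


def min_mbps_for(file_mb: float, seconds: float) -> float:
    """Upload speed needed to send file_mb within the given time."""
    return file_mb * 8 / seconds


if __name__ == "__main__":
    # Speed needed to upload a 200MB file in 60 seconds.
    print(round(min_mbps_for(200, 60), 1), "Mbps")
    # Time to upload the same file on a 10 Mbps uplink.
    print(upload_seconds(200, 10), "seconds")
```

Under these assumptions, a 200MB file needs an upload speed of about 27 Mbps to finish within a minute; on a 10 Mbps uplink the same file takes closer to three minutes.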

If your files are too large even for Google Sheets' 10-million-cell limit, the CSV Splitter can break them into manageable chunks before import.
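
The splitting idea itself is simple: repeat the header in every chunk and cap each chunk by cell count. The sketch below illustrates that approach and is not how the CSV Splitter tool is implemented; `split_rows` is a hypothetical function, and sizing chunks from the header's column count is an assumption.

```python
import csv
import io
from typing import Iterator, List, TextIO

Row = List[str]


def split_rows(stream: TextIO, max_cells: int = 10_000_000) -> Iterator[List[Row]]:
    """Yield chunks (header + data rows) that each stay within max_cells."""
    reader = csv.reader(stream)
    header = next(reader, None)
    if header is None:               # empty input: nothing to yield
        return
    cols = max(len(header), 1)
    # Reserve one row per chunk for the repeated header.
    rows_per_chunk = max(max_cells // cols - 1, 1)
    chunk: List[Row] = [header]
    for row in reader:
        chunk.append(row)
        if len(chunk) - 1 >= rows_per_chunk:
            yield chunk
            chunk = [header]
    if len(chunk) > 1:               # final partial chunk
        yield chunk


if __name__ == "__main__":
    sample = io.StringIO("id,name\n1,a\n2,b\n3,c\n")
    for i, part in enumerate(split_rows(sample, max_cells=6), start=1):
        print(f"chunk {i}: {part}")
```

Each yielded chunk can be written to its own file with `csv.writer` and imported separately. For very large inputs, writing rows to the output file as they are read, instead of collecting a chunk in a list first, would keep memory use flat.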

When Browser Processing Is Fine

Server-side processing is not always necessary. Browser-based imports work perfectly well in these scenarios:

- Files under 20MB: At this size, even mobile browsers can handle the import without issues. The memory footprint stays well within safe limits.

- One-off imports: If you are importing a single small file once, the built-in File > Import menu is the fastest path. No add-on needed.

- Quick previews: Sometimes you just want to glance at the first few rows of a CSV. Google Sheets handles this fine for any file size since it only loads a preview.

- Files with few columns: A 30MB file with 3 columns creates far fewer JavaScript objects than a 30MB file with 50 columns. Column count matters more than people think.

The tipping point is around 20-30MB for most users. Below that, browser imports are fast and reliable. Above that, you are rolling the dice -- and the odds get worse with every additional megabyte. For anything you import regularly or anything above 30MB, server-side processing saves real time and frustration.

The Future of Spreadsheet Data Processing

The shift from browser-side to server-side processing is part of a bigger trend in how spreadsheet tools handle data. Here is where things are heading:

Connected Sheets and external data: Google's BigQuery Connected Sheets already lets enterprise users query billions of rows without loading data into the spreadsheet at all. The data stays in BigQuery and only the results appear in Sheets. This is server-side processing taken to its logical extreme.

Server-side add-ons becoming the norm: The Google Workspace Marketplace increasingly favors add-ons that process data server-side. SmoothSheet is part of this wave -- handling the heavy lifting on servers so your browser just manages the interface. As files get larger and data workflows get more complex, this architecture becomes essential rather than optional.

AI-powered data processing: The next frontier is AI-assisted imports -- automatically detecting column types, cleaning messy data, and suggesting transformations before data even reaches your spreadsheet. This requires server-side compute that browsers simply cannot provide.

Edge computing and WebAssembly: On the browser side, WebAssembly (Wasm) is closing the performance gap for smaller files by running compiled code in the browser at near-native speed. But for files above 100MB, the fundamental RAM constraint remains -- no amount of faster parsing helps if the data does not fit in memory.

The bottom line: browser-side processing is not disappearing, but it is increasingly limited to small, simple imports. For serious data workflows -- especially those involving files over 30MB or recurring imports -- server-side processing is the future, and it is already here.

FAQ

What is the difference between server-side and browser-side CSV processing?

Browser-side processing loads the entire CSV file into your browser's memory, parses it with JavaScript, and then sends it to Google Sheets. Server-side processing uploads the file to a dedicated server that parses and streams the data directly to Sheets via API, keeping your browser lightweight and crash-free.

Why does my browser crash when importing large CSV files?

Your browser tab has a memory limit of roughly 1-2GB. A 100MB CSV file can consume 400-600MB of browser memory due to string conversion and object creation during parsing. When this exceeds the tab's memory allocation, Chrome displays an "Aw, Snap!" error and the tab crashes. Files above 100MB crash most browsers consistently.

How large a CSV file can SmoothSheet handle?

SmoothSheet uses server-side processing to handle CSV files up to 500MB. Because parsing happens on dedicated servers with significantly more RAM and CPU than a browser tab, there are no browser crashes or freezes. Beyond that file-size ceiling, the only other limit is Google Sheets' own 10-million-cell cap.

When should I use browser-based import vs server-side processing?

Use Google Sheets' built-in File > Import for CSV files under 20MB -- it is fast and reliable at that size. For files over 30MB, recurring imports, or any situation where browser crashes have been an issue, switch to a server-side tool like SmoothSheet. The large CSV handling guide covers additional strategies for oversized files.

Conclusion

The difference between browser-side and server-side CSV processing comes down to one thing: where the work happens. When your browser does the parsing, you are limited by tab memory, single-threaded JavaScript, and the hope that Chrome does not crash before the import finishes. When a server does the parsing, those constraints disappear.

For small files, browser imports are perfectly fine. For anything over 30MB -- or for recurring imports where reliability matters -- server-side processing is not just faster, it is the only approach that consistently works. SmoothSheet handles files up to 500MB at $9/month with no per-file charges, no row caps, and no browser crashes. If large CSV imports are part of your workflow, it is worth trying.